Statistical Power and Type II Errors: Ensuring Scientific Rigor in Clinical Trials

In clinical research, a statistically non-significant result (p > 0.05) is often misinterpreted as evidence of "no effect." This interpretation is dangerous when the study lacks sufficient statistical power. Failing to detect a true clinical difference, often because the sample is too small, is a Type II error; its probability is denoted beta (β). Such errors compromise the integrity of evidence-based medicine.

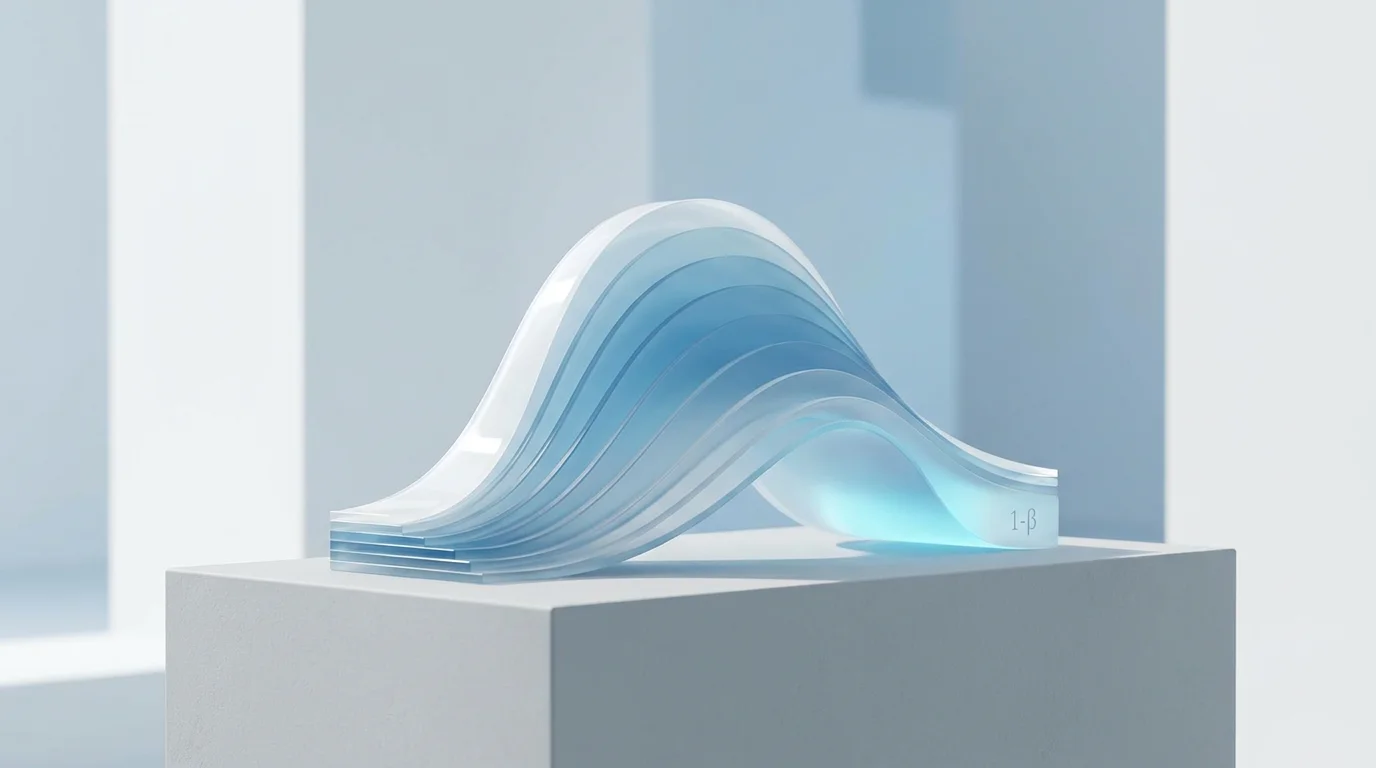

Direct Answer: Statistical power (1-β) is the probability of correctly rejecting a false null hypothesis. Clinical trials are conventionally designed for 80% to 90% power, and reviewers at high-impact journals generally expect this range, to limit the risk of false-negative conclusions.

Understanding the Trade-off Between Alpha and Beta

Every statistical test balances the risk of a Type I error, whose probability is alpha (α), against the risk of a Type II error, whose probability is beta (β). Alpha is conventionally set at 0.05 to guard against false positives, while beta is the probability of missing a real effect of a given size. For a fixed sample size, lowering alpha raises beta. Increasing the sample size is the most direct way to reduce beta without relaxing alpha, which increases the sensitivity of a clinical trial.

- Alpha (α): The probability of finding an effect that does not exist.

- Beta (β): The probability of failing to find an effect that *does* exist.

- Power (1-β): The probability that a study detects a difference of a specified size if one is present.
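The relationship between these three quantities can be sketched numerically. The example below is a minimal illustration, assuming a two-sided, two-sample z-test with equal group sizes and a known common standard deviation (a normal approximation, not the exact t-test calculation). The function name `power_two_sample_z` and the input values (a true difference of 5 units, a standard deviation of 10) are hypothetical and chosen only for illustration.

```python
from math import sqrt
from statistics import NormalDist


def power_two_sample_z(delta, sigma, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test.

    Assumes equal group sizes and a known common standard deviation.
    delta is the true difference in means; sigma is the outcome SD.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)       # critical value for the chosen alpha
    se = sigma * sqrt(2 / n_per_group)      # standard error of the difference
    shift = delta / se                      # true effect in standard-error units
    # Probability that the test statistic falls in either rejection region.
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


# Hypothetical scenario: true difference of 5 units, SD of 10.
for n in (20, 50, 100):
    power = power_two_sample_z(delta=5.0, sigma=10.0, n_per_group=n)
    print(f"n per group = {n:3d}: power = {power:.2f}, beta = {1 - power:.2f}")

# At a fixed sample size, a stricter alpha lowers power (raises beta).
for alpha in (0.05, 0.01):
    power = power_two_sample_z(delta=5.0, sigma=10.0, n_per_group=50, alpha=alpha)
    print(f"alpha = {alpha}: power = {power:.2f}")
```

Under these assumptions, power rises with the number of participants per group and falls when alpha is tightened, which is the trade-off described above.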

Impact of Underpowered Studies in Peer Review

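Manuscripts reporting "negative" results without a predefined (a priori) sample size calculation are frequently targeted for desk rejection. A post-hoc power analysis based on the observed effect is a weak substitute, because it is a direct function of the p-value and adds no new information; a confidence interval for the effect conveys the remaining uncertainty more clearly. Reviewers look for assurance that the study was large enough to provide a definitive answer. An underpowered study yields ambiguous evidence, which wastes institutional resources and participant contributions.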
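One way to see why an underpowered "negative" result is ambiguous is to look at the confidence interval for the difference. The sketch below assumes a normal approximation with a known standard deviation. The function name `diff_ci`, the observed difference of 3 units, and the clinically relevant difference of 5 units are all hypothetical values used for illustration.

```python
from math import sqrt
from statistics import NormalDist


def diff_ci(observed_diff, sigma, n_per_group, level=0.95):
    """Normal-approximation confidence interval for a difference in means.

    Assumes equal group sizes and a known common standard deviation.
    """
    z_crit = NormalDist().inv_cdf(1 - (1 - level) / 2)
    se = sigma * sqrt(2 / n_per_group)
    return observed_diff - z_crit * se, observed_diff + z_crit * se


# Hypothetical small trial: 20 participants per group, SD of 10.
low, high = diff_ci(observed_diff=3.0, sigma=10.0, n_per_group=20)
relevant_diff = 5.0  # hypothetical clinically relevant difference

print(f"95% CI: ({low:.2f}, {high:.2f})")
print("Interval includes zero:", low <= 0.0 <= high)
print("Interval includes the relevant difference:", low <= relevant_diff <= high)
```

In this scenario the interval runs from roughly -3.2 to 9.2, so it contains both zero and the clinically relevant difference. The data cannot distinguish "no effect" from "a meaningful effect," which is the ambiguity reviewers object to.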

Optimizing Rigor through Sample Size Planning

To reduce the risk of Type II errors, researchers must perform a rigorous sample size calculation during the protocol design phase. This calculation requires four inputs: the significance level (alpha), the target power (1-β), the expected effect size (the difference between groups), and the variability of the outcome (standard deviation). Choosing a realistic effect size based on pilot data or previous literature is critical, because an overly optimistic effect size produces a sample that is too small and undermines the scientific rigor of the final report.
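As a rough illustration of this calculation, the sketch below uses the standard normal-approximation formula for comparing two means with equal group sizes, n = 2(z_{1-α/2} + z_{1-β})² σ² / δ² per group. The function name `n_per_group` and the inputs (an expected difference of 5 units, a standard deviation of 10) are hypothetical. A real protocol would typically use a t-distribution correction, allow for dropout, and rely on validated software or a statistician.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(delta, sigma, alpha=0.05, power=0.80):
    """Participants needed per group for a two-sided, two-sample comparison.

    Normal approximation with equal group sizes and a known common SD.
    delta is the expected difference in means; sigma is the outcome SD.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)   # quantile for the two-sided alpha
    z_beta = z.inv_cdf(power)            # quantile for the target power
    return ceil(2 * ((z_alpha + z_beta) * sigma / delta) ** 2)


# Hypothetical inputs: expected difference of 5 units, SD of 10.
print(n_per_group(delta=5.0, sigma=10.0, power=0.80))  # 63
print(n_per_group(delta=5.0, sigma=10.0, power=0.90))  # 85

# Halving the expected effect size roughly quadruples the required sample.
print(n_per_group(delta=2.5, sigma=10.0, power=0.80))
```

The last line shows why the choice of effect size matters so much: the required sample scales with the inverse square of the expected difference.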

Technical Conclusion

Statistical power is a fundamental pillar of experimental design. By prioritizing high power and transparent reporting of sample size calculations, researchers provide the clarity required for peer acceptance and clinical implementation.

LINGCORE SCI
