AI in Systematic Reviews: Balancing Efficiency and Methodological Rigor

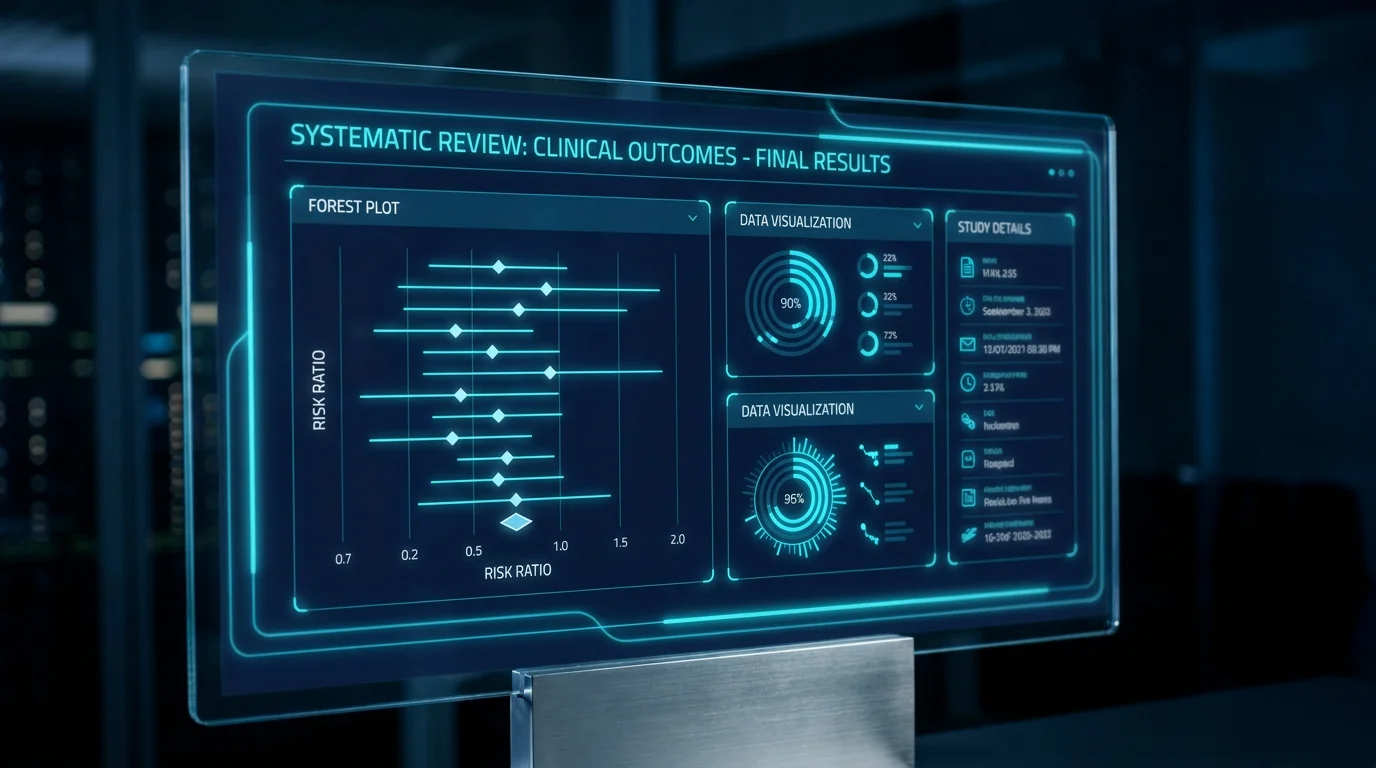

Conducting a systematic review is a labor-intensive endeavor that traditionally requires months of manual screening and data extraction. However, the integration of **Artificial Intelligence (AI)** is fundamentally altering the landscape of evidence synthesis. In 2026, researchers are using machine learning algorithms to handle the sheer volume of records that modern literature searches return. This makes near real-time updates to clinical guidelines feasible, provided that **scientific integrity** is safeguarded through validation and transparent reporting.

Technical Reality: AI-assisted screening has been reported to cut screening time substantially (by up to 80% in some evaluations), but its use must be transparently reported. Top-tier journals increasingly expect adherence to the **PRISMA-AI** reporting guidelines so that the automated steps are reproducible and can be assessed for algorithmic bias.

Automating the Screening Phase

The first and most significant impact of AI is in the title and abstract screening phase. With active learning, researchers train an algorithm on a small subset of manually screened papers. The model then ranks the remaining thousands of abstracts by predicted relevance and is retrained as the team screens each new batch, so the most likely inclusions keep rising to the top of the queue. This lets the human team reach the "saturation point" (where no more relevant studies are found) much faster than with traditional methods.
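The active-learning loop described above can be sketched in a few lines of Python. This is a minimal, illustrative sketch, not how any particular screening tool works. It assumes a from-scratch multinomial naive Bayes classifier (a deliberately simple stand-in for the models real tools use), a hypothetical `oracle` function standing in for the human screener, and a hypothetical stopping rule that halts after a fixed number of consecutive exclusions. The eight toy abstracts are invented for illustration.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase whitespace tokenizer that strips simple punctuation."""
    tokens = (w.strip(".,;:()").lower() for w in text.split())
    return [t for t in tokens if t]


def train_nb(docs, labels):
    """Fit a multinomial naive Bayes model on screened abstracts (True = include)."""
    counts = {True: Counter(), False: Counter()}
    for doc, label in zip(docs, labels):
        counts[label].update(tokenize(doc))
    n_docs = Counter(labels)
    vocab = set(counts[True]) | set(counts[False])
    return counts, n_docs, vocab


def relevance_score(model, doc):
    """Log-odds that `doc` is relevant, with add-one (Laplace) smoothing."""
    counts, n_docs, vocab = model
    total = n_docs[True] + n_docs[False]
    log_prob = {}
    for label in (True, False):
        denom = sum(counts[label].values()) + len(vocab)
        log_prob[label] = math.log((n_docs[label] + 1) / (total + 2)) + sum(
            math.log((counts[label][w] + 1) / denom) for w in tokenize(doc)
        )
    return log_prob[True] - log_prob[False]


def prioritised_screening(records, oracle, seed_ids, batch_size=1, stop_after=3):
    """Screen records in model-ranked order, retraining after every batch.

    `oracle(i)` stands in for the human screener's include/exclude decision.
    Screening stops once `stop_after` consecutive records are excluded
    (a simple, hypothetical stopping rule) or every record has been screened.
    """
    labels = {i: oracle(i) for i in seed_ids}
    order = list(seed_ids)
    consecutive_exclusions = 0
    while len(labels) < len(records) and consecutive_exclusions < stop_after:
        ids = list(labels)
        model = train_nb([records[i] for i in ids], [labels[i] for i in ids])
        pending = [i for i in range(len(records)) if i not in labels]
        pending.sort(key=lambda i: relevance_score(model, records[i]), reverse=True)
        for i in pending[:batch_size]:
            labels[i] = oracle(i)
            order.append(i)
            consecutive_exclusions = 0 if labels[i] else consecutive_exclusions + 1
    return order, labels


# Toy, invented abstracts; indices 0, 2, 4 and 7 are the "relevant" ones.
records = [
    "randomised controlled trial of statin therapy in adults",
    "cost analysis of hospital parking fees",
    "statin therapy trial reduces cardiovascular events",
    "survey of nurse staffing levels",
    "placebo controlled statin trial in older adults",
    "history of hospital architecture",
    "qualitative study of staffing and parking",
    "statin versus placebo randomised trial outcomes",
]
relevant = {0, 2, 4, 7}

order, labels = prioritised_screening(
    records, oracle=lambda i: i in relevant, seed_ids=[0, 1], stop_after=2
)
print("screening order:", order)
print("records never screened:", len(records) - len(order))
```

In this toy run, all four relevant records are screened before any further irrelevant one, and the loop stops with one record never screened. The stopping rule is the most consequential design choice here, because it determines how much relevant evidence could be missed, which is why it belongs in the methods section.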

- Dual-Review Reliability: AI can serve as the "second reviewer" in lower-risk screening tasks, flagging discrepancies for a human expert to resolve. This preserves the standard of independent dual screening while cutting resource requirements.

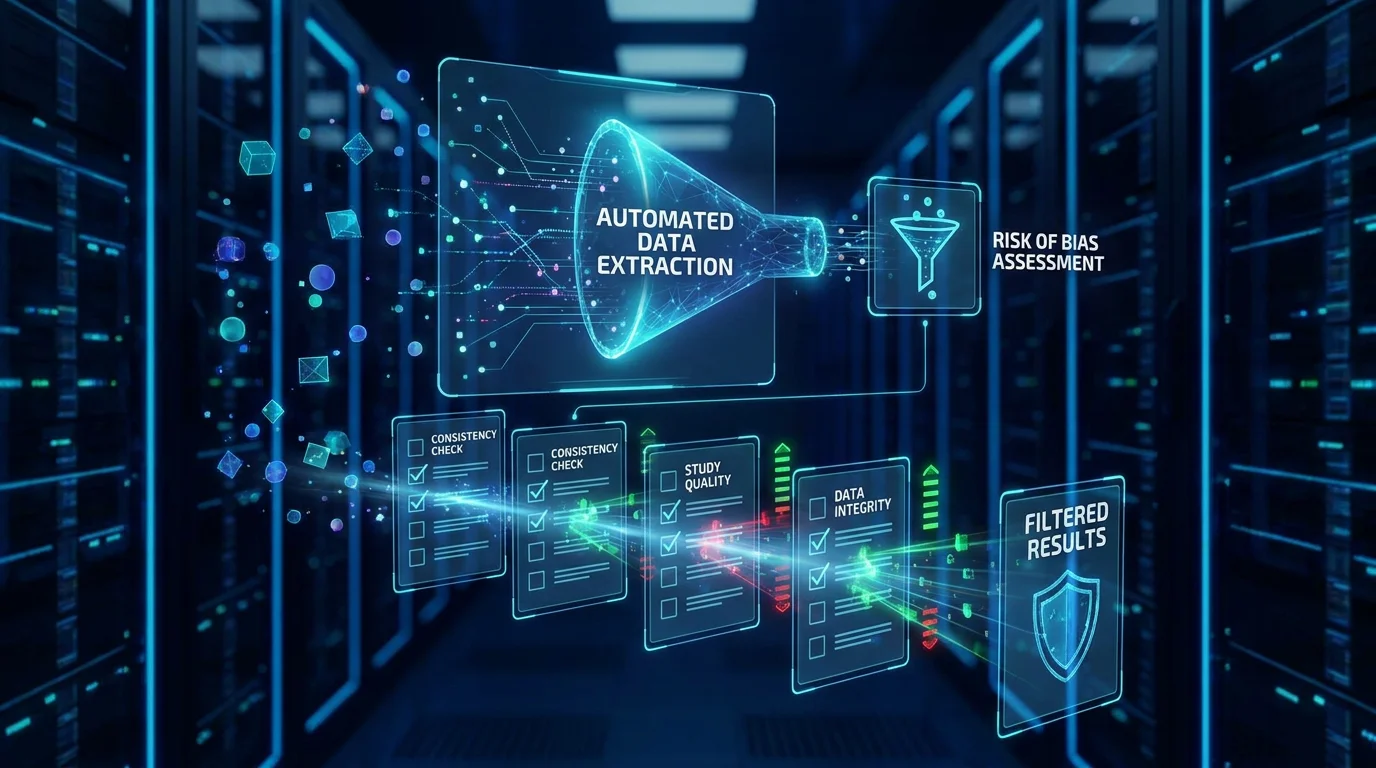

- Risk of Bias (RoB) Assessment: Advanced NLP models can now pre-populate RoB forms by extracting relevant passages from the methodology sections of randomized controlled trials (RCTs), significantly speeding up the evaluation of study quality.

- Automated Data Extraction: Standardized tables can be generated automatically from PDF manuscripts, though human verification remains essential for complex primary outcomes.
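To make the "AI as second reviewer" idea concrete, the sketch below compares a human screener's include/exclude decisions with a model's, lists the records that need adjudication, and computes Cohen's kappa, a standard chance-corrected agreement statistic for two raters. The record IDs and decisions are hypothetical, and how the AI decisions are produced is deliberately left open.

```python
def discrepancies(human, ai):
    """Record IDs where the human and AI include/exclude decisions differ."""
    return sorted(rid for rid in human if human[rid] != ai[rid])


def cohens_kappa(human, ai):
    """Cohen's kappa for two raters making binary include/exclude decisions."""
    ids = list(human)
    n = len(ids)
    observed = sum(human[i] == ai[i] for i in ids) / n
    p_human = sum(human[i] for i in ids) / n
    p_ai = sum(ai[i] for i in ids) / n
    expected = p_human * p_ai + (1 - p_human) * (1 - p_ai)
    if expected == 1:  # both raters gave the same single label to every record
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical screening decisions (True = include).
human = {"r1": True, "r2": False, "r3": True, "r4": False, "r5": False, "r6": True}
ai = {"r1": True, "r2": True, "r3": True, "r4": False, "r5": False, "r6": False}

print("send to adjudication:", discrepancies(human, ai))
print("Cohen's kappa:", round(cohens_kappa(human, ai), 3))
```

Here records r2 and r6 would go to a human expert. Agreement is 4 of 6 records, but because each rater includes half the records, chance agreement is 0.5 and kappa is only 1/3, a reminder that raw agreement overstates reliability.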
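The pre-population of risk-of-bias forms can be illustrated with a much cruder technique than the NLP models mentioned above: keyword matching over the sentences of a methods section. The domain names and cue words below are hypothetical and chosen only for illustration. The point is the workflow: the software proposes supporting passages, the judgement field stays empty, and every domain is marked for human review. The same propose-then-verify pattern applies to automated data extraction.

```python
import re

# Hypothetical domains and keyword cues, for illustration only; the NLP models
# described in the text would replace these fixed keyword lists.
DOMAIN_CUES = {
    "randomisation": ["randomi", "random sequence", "computer-generated"],
    "allocation_concealment": ["concealed", "sealed", "central allocation"],
    "blinding": ["blind", "masked", "placebo"],
}


def split_sentences(text):
    """Naive sentence splitter: break after ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def prefill_rob_form(methods_text):
    """Suggest supporting passages per domain; judgements are left to a human."""
    sentences = split_sentences(methods_text)
    form = {}
    for domain, cues in DOMAIN_CUES.items():
        hits = [s for s in sentences if any(cue in s.lower() for cue in cues)]
        form[domain] = {
            "supporting_passages": hits,
            "judgement": None,  # to be completed by the reviewer
            "status": "needs_human_review",
        }
    return form


methods = (
    "Participants were randomised using a computer-generated list. "
    "Allocation was concealed in sealed opaque envelopes. "
    "Outcome assessors were blinded to group assignment. "
    "Follow-up lasted 12 weeks."
)
for domain, entry in prefill_rob_form(methods).items():
    print(domain, "->", entry["supporting_passages"])
```

On this sample text each domain picks up exactly one supporting sentence, and the follow-up sentence is assigned to none. A keyword approach like this misses paraphrases and negations ("assessors were not blinded" would still match), which is precisely why the human check cannot be skipped.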

Maintaining Methodological Rigor

The primary concern with AI-assisted reviews is the risk of "missing" critical evidence due to non-transparent ranking systems. To mitigate this, researchers must document the specific AI tools used, the training set size, and the inclusion/exclusion thresholds. This transparency allows peer reviewers to evaluate whether the automated sweep was sufficiently comprehensive.
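One way to make that documentation systematic is to capture the automation settings in a structured, machine-readable record that can be attached as supplementary material. The field names, tool name, and values below are hypothetical placeholders, not a schema required by PRISMA-AI or any other guideline.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ScreeningAutomationRecord:
    """Hypothetical record of how an AI tool was used during screening."""

    tool_name: str
    tool_version: str
    training_set_size: int  # records screened manually before the model was trained
    inclusion_threshold: float  # assumed model score cut-off for keeping a record
    stopping_rule: str
    records_total: int
    records_screened_by_humans: int

    def validate(self):
        """Return a list of internal-consistency problems (empty if none)."""
        problems = []
        if not 0.0 <= self.inclusion_threshold <= 1.0:
            problems.append("inclusion_threshold must be between 0 and 1")
        if self.training_set_size <= 0:
            problems.append("training_set_size must be positive")
        if self.records_screened_by_humans > self.records_total:
            problems.append("more records screened than retrieved")
        return problems


# Illustrative values only.
record = ScreeningAutomationRecord(
    tool_name="example-screener",
    tool_version="1.2.0",
    training_set_size=500,
    inclusion_threshold=0.3,
    stopping_rule="stop after 200 consecutive exclusions",
    records_total=12000,
    records_screened_by_humans=4100,
)
print("problems:", record.validate())
print(json.dumps(asdict(record), indent=2))
```

Serializing to JSON keeps the record easy to version alongside the review protocol and gives peer reviewers the exact tool version, training set size, and thresholds in one place.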

The Lingcore SCI Advantage

At **Lingcore SCI**, we specialize in providing researchers with the tools needed for high-impact evidence synthesis. Our **Review Builder** and **Paper Analyzer** integrate validated AI models that cross-reference data points against **PubMed** and **Semantic Scholar**, ensuring that your systematic review is built on a foundation of verifiable data and rigorous analysis.

Conclusion

The integration of AI into systematic reviews is not a replacement for human expertise but a powerful extension of it. By automating the repetitive elements of literature synthesis, researchers can focus their intellectual energy on interpreting the evidence and deriving the clinical insights that drive progress in medical science.

LINGCORE SCI